François Glineur

Full professor at
Member of the
Member of the

Address: UCLouvain / ICTM / INMA, Euler building, office a124 (first floor)

Francois.Glineur@uclouvain.be

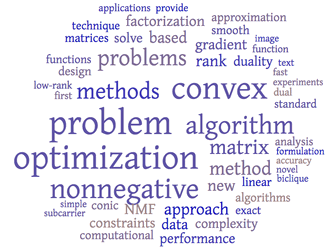

Research interests

- Convex optimization

- Nonnegative matrix factorization

Recent talks

- Performance estimation of optimization methods: a guided tour, given at NOPTA 2024 in Antwerp (April 2024): slides

Publications

An up-to-date list of publications can be found on Google Scholar or in the UCLouvain institutional repository. Some recent preprints can be found on arXiv.

Erdős #.

Research group

Research group in 2021 (picture with P.-A. Absil's group; from left to right: Teodor, Yassine, Hazan, Nizar, Pierre-Antoine, Guillaume O, François, Cécile, Simon, Philémon, Valentin, Guillaume VD, Loïc)

PhD students

- Visiting PhD student Zhicheng Deng (2023-2024, China Scholarship Council).

- Sofiane Tanji (2021-, TraDE-OPT H2020 ITN).

- Nizar Bousselmi (2021-, FRIA grant, co-supervised with Julien Hendrickx).

- Philémon Beghin (2021-, UCLouvain teaching assistant, co-supervised with Anne-Emmanuelle Ceulemans).

- Teodor Rotaru (2021-, Global PhD Partnership KU Leuven - UCLouvain, co-supervised with Panos Patrinos).

- Yassine Kamri (2021-, TraDE-OPT H2020 ITN, co-supervised with Julien Hendrickx).

- Guillaume Van Dessel (2019-, UCLouvain teaching assistant).

Completed PhDs

- Cécile Hautecoeur (2018-2022, SeLMA EOS project), profile.
  PhD thesis: Nonnegative Matrix Factorization using Parametrizable Functions, link.

- Valentin Hamaide (2018-2022, BidMed project, BioWin cluster, Région wallonne, co-supervised in part with Benoît Macq), profile.
  PhD thesis: Data-driven learning and optimization approaches for proton therapy, link.

- Julien Dewez (2013-2021, DYSCO IAP grant and UCLouvain teaching assistant), profile.
  PhD thesis: Computational Approaches for Lower Bounds on the Nonnegative Rank, link.

- Benoît Martin (2013-2018, FRIA grant, co-supervised with Emmanuel De Jaeger), profile.
  PhD thesis: Autonomous microgrids for rural electrification: joint investment planning of power generation and distribution through convex optimization, link.

- Adrien Taylor (2012-2017, FRIA grant, co-supervised with Julien Hendrickx), profile, now research scientist at INRIA, see his home page.
  PhD thesis: Convex Interpolation and Performance Estimation of First-order Methods for Convex Optimization (ICTEAM thesis award 2018; IBM Innovation award 2018; Mathematical Optimization Society Tucker Prize finalist), link.

- Arnaud Latiers (2012-2016, Doctiris grant, co-supervised with Emmanuel De Jaeger), profile.
  PhD thesis: Autonomous Frequency Containment Reserves from Energy Constrained Loads: A system perspective (SRBE-KVBE Robert SINAVE thesis award 2016), link.

- Olivier Devolder (2009-2013, FNRS grant, co-supervised with Yurii Nesterov), profile.
  PhD thesis: Exactness, Inexactness and Stochasticity in First-Order Methods for Large-Scale Convex Optimization (ICTEAM thesis award 2014), link.

- Jonathan Denies (2008-2013, FSR+FRIA grant, co-supervised with Bruno Dehez and Hamid Ben Ahmed), profile.
  PhD thesis: Métaheuristiques pour l'optimisation topologique : application à la conception de dispositifs électromagnétiques (Metaheuristics for topology optimization: application to the design of electromagnetic devices), link.

- Nicolas Gillis (2007-2011, FNRS grant), profile, now professor at Université de Mons, see his home page.
  PhD thesis: Nonnegative Matrix Factorization, Complexity, Algorithms, Applications (ICTEAM thesis award 2012; Householder Award XV, 2011-2013), link.

- Robert Chares (2005-2009, FSR+FRIA grant), profile.
  PhD thesis: Cones and Interior-Point Algorithms for Structured Convex Optimization involving Powers and Exponentials, link.

Postdoctoral collaborators

- Moslem Zamani (2023-, SeLMA EOS project), working on Performance estimation of first-order optimization methods.

- Yu Guan (2018-2020, SeLMA EOS project), working on Graph regularized tensor completion.

- Sebastian Stich (2014-2016, ARC Big Data and SNF grants), working on Large-scale optimization.

- Augustin Lefèvre (2012-2013, IAP Dysco), working on Informed source separation.

- Nicolas Gillis (2012-2013, FNRS grant), working on Constrained low-rank matrix and tensor approximations: complexity, algorithms, and applications.

- Paschalis Tsiaflakis (2011, Francqui intercommunity grant), working on Optimization in Wireless MIMO Relay Networks.

Master students

- Diego Eloi, Worst-case functions for the gradient method with fixed variable step sizes (2022)

- Augustin d'Oultremont, A parser for auto-formalization in education (2022)

- Félix de Patoul, Predictive maintenance: predict upcoming failures via machine learning (2022) (with V. Hamaide)

- Tomasz Kwasniewicz, Implementation of a semidefinite optimization solver in the Julia programming language (2021)

- Nizar Bousselmi, Newton's problem: A smooth minimum method (2021)

- Marie Hartman and Alexia De Poorter, Automated estimation of performance of optimization methods (2021) (with J. Hendrickx)

- Andres Zarza Davila, Proof of concept of an interactive theorem prover system using natural language input (2021)

- Philémon Beghin, A digital tool at the service of organology: validation of a photogrammetric approach (2021) (with P. Fisette, A.-E. Ceulemans)

- Fabio Mercurio, Neural networks and gradient boosting for predictive maintenance of a proton therapy machine (2020) (with V. Hamaide)

- Olivier De Boeck, Convex optimization with inexact second-order oracles (2020)

- Jonas Dubois, Block term tensor decomposition by numerical optimization (2019) (with G. Olikier)

- Antoine Daccache, Performance estimation of the gradient method with fixed arbitrary step sizes (2019)

- Renaud Lothaire, Characterization of violins: a digital tool at the service of organology (2019) (with P. Fisette, A.-E. Ceulemans)

- Guillaume Van Dessel, Stochastic gradient based methods for large-scale optimization: review, unification and new approaches (2019) (with S. Stich)

- Brieuc Pinon, Learning through Optimization Programs (2019)

- Thibault Etienne, Beamforming of large antenna arrays with nulling using Lp-norms for sidelobe minimization (2018) (with C. Craeye)

- Jos Zigabe, Automatic scheduling for EPL lab sessions (2018) (with C. Poncin)

- Loïc Van Hoorebeeck, Calibration of the SKA-low antenna array using drones (2018) (with C. Craeye)

- Simon-Pierre Cordonnier, Identification of the optimal glass type for building (2018) (with T. Timmermans (AGC))

- Virginie Mathy, Industrial Process Optimization: Towards Energy Cost Minimization (2018) (with A. Latiers)

- Adrien Brogniet and Charles Ninane, Construction of an Automated Examination Timetabling System for École Polytechnique de Louvain (2017)

- Dylan Meynaert, Performance Estimation of First-Order Methods (2017)

- Valentin Hamaide, Optimal interference nulling for large arrays of coupled antennas (2017) (with C. Craeye)

- Ludovic Fastré, Automatic scheduling for master thesis defenses (2017) (with P. Schaus)

- Sébastien Lagae, New efficient techniques to solve sparse structured linear systems, with applications to truss topology optimization (2017) (with Y. Nesterov)

- Nicolas Boutet, Developing a symmetrical version of the quasi-Newton least squares algorithm (2017) (with R. Haelterman (ERM))

Courses currently taught

- LEPL1102 Analyse I (Analysis I, taught in French). Link to the course description and the course home page.

- LINFO1111 Analyse (Analysis, taught in French). Link to the course description and the course home page.

- LEPL1105 Analyse II (Analysis II, taught in French). Link to the course description and the course home page.

- LINMA1702 Modèles et méthodes d'optimisation I (Optimization models and methods I, taught in French). Link to the course description and the course home page.

- LINMA2471 Optimization models and methods II. Link to the course description and the course home page.

- LINMA2120 Applied mathematics seminar. Link to the course description and the course home page.