eBPF in IETF protocols

In 1992, Steven McCanne and Van Jacobson proposed the BSD Packet Filter <https://www.tcpdump.org/papers/bpf-usenix93.pdf> as an efficient technique to implement flexible packet filters in the tcpdump packet capture tool. In a nutshell, BPF specifies a small instruction set which can be used to write programs that are executed on the captured packets to determine which packets match. BPF is the standard solution to build powerful packet filters in tcpdump and related tools.
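To make the idea of a filter program concrete, here is a minimal sketch of a toy filter machine in Python. It is only loosely inspired by classic BPF: the opcode names, the tuple encoding and the helper `make_frame` are assumptions made for this illustration and do not reflect the real BPF instruction encoding. The example program accepts Ethernet frames that carry IPv4 packets whose protocol field is TCP.

```python
import struct

# Toy opcodes, loosely modelled on classic BPF. The names and the tuple
# encoding are illustrative assumptions, not the real BPF instruction format.
LD_H, LD_B, JEQ, RET = "ld_h", "ld_b", "jeq", "ret"


def run_filter(program, packet):
    """Run a toy filter program on raw packet bytes; True means 'match'."""
    acc = 0  # single accumulator register
    pc = 0   # program counter
    while pc < len(program):
        op, *args = program[pc]
        if op == LD_H:  # load a 16-bit big-endian value at a fixed offset
            offset = args[0]
            if offset + 2 > len(packet):
                return False  # an out-of-bounds load rejects the packet
            acc = struct.unpack_from("!H", packet, offset)[0]
        elif op == LD_B:  # load a single byte at a fixed offset
            offset = args[0]
            if offset + 1 > len(packet):
                return False
            acc = packet[offset]
        elif op == JEQ:  # compare, then skip jt or jf instructions
            value, jt, jf = args
            pc += jt if acc == value else jf
        elif op == RET:  # terminate with a verdict
            return bool(args[0])
        else:
            raise ValueError(f"unknown opcode {op!r}")
        pc += 1
    return False


# Filter program: accept IPv4 (EtherType 0x0800) frames carrying TCP (protocol 6).
IPV4_TCP = [
    (LD_H, 12),           # EtherType sits at offset 12 of an Ethernet frame
    (JEQ, 0x0800, 0, 3),  # not IPv4: skip ahead to the final "reject"
    (LD_B, 23),           # IPv4 protocol field: 14-byte Ethernet header + 9
    (JEQ, 6, 0, 1),       # not TCP: skip the "accept"
    (RET, 1),             # accept
    (RET, 0),             # reject
]


def make_frame(ethertype, proto):
    """Build a minimal Ethernet frame with a 20-byte IPv4-like header (test helper)."""
    ip = bytearray(20)
    ip[0] = 0x45   # version 4, header length 5 words
    ip[9] = proto  # protocol field
    return bytes(12) + struct.pack("!H", ethertype) + bytes(ip)


if __name__ == "__main__":
    print(run_filter(IPV4_TCP, make_frame(0x0800, 6)))   # TCP over IPv4
    print(run_filter(IPV4_TCP, make_frame(0x0800, 17)))  # UDP over IPv4
```

The point of the design is that the filter is data, not code compiled into the capture tool: changing what is captured only requires loading a different program into the same small interpreter.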

Almost 25 years later, Alexei Starovoitov used a similar approach to design extended BPF (eBPF). eBPF is an extension of the original BPF instruction set which is supported by a virtual machine, a Just-In-Time compiler and a verifier inside the Linux kernel. With eBPF, it becomes possible to add executable bytecode inside the Linux kernel to tune its behavior without having to recompile the kernel or use modules. eBPF is now widely used inside the Linux kernel and there are many use cases.

eBPF has also been ported to Microsoft Windows. To encourage the adoption of eBPF in use cases beyond the Linux kernel, the IETF has chartered the BPF/eBPF working group to specify the basic features of this technology, namely the eBPF Instruction Set, the expectations for the verifier, …

eBPF should not be limited to operating system kernels such as Linux and Windows. There are many other use cases where the extensibility of eBPF can be beneficial. The IETF spends a lot of effort maintaining and slowly extending widely deployed protocols. In recent years, we have demonstrated that by leveraging eBPF inside protocol implementations, it is possible to make them much more flexible. Here are a few examples that illustrate the flexibility that eBPF can bring to IETF protocols.

A first example is IPv6 Segment Routing or SRv6. David Lebrun has implemented SRv6 in the Linux kernel and Mathieu Xhonneux added eBPF features to this implementation to make it easier to extend.

Our second example is the utilization of eBPF to monitor the networking stack. This is one of the classical use cases for eBPF today. Olivier Tilmans proposed the COP2 set of eBPF programs to monitor the performance of the networking stack and export the collected metrics using IPFIX.

Our third example is TCP. TCP is the standard reliable transport protocol for many applications. Viet-Hoang Tran showed that it is possible to leverage eBPF to extend both TCP and Multipath TCP by adding new TCP options in the Linux TCP stack. Mathieu Jadin took a different approach and proposed the TCP Path Changer (TPC). TPC leverages eBPF and IPv6 Segment Routing to allow a TCP stack to react to different types of events by moving established TCP connections to a different path.

QUIC is a new IETF protocol that replaces the classical TLS over TCP stack. It is already widely deployed by cloud providers. Quentin De Coninck, Maxime Piraux and Francois Michel showed in Pluginizing QUIC that it is possible to use eBPF inside a QUIC implementation to support various protocol extensions including Multipath, VPN or Forward Erasure Correction.

Our last example is BGP. BGP is the most important Internet routing protocol, and it evolves slowly. With xBGP, Thomas Wirtgen demonstrated that by adding eBPF support in the BIRD and FRRouting implementations, it becomes possible for network operators to develop their own extensions to the BGP protocol and execute them as eBPF programs.

Once the IETF BPF/eBPF working group has finished the standardization of the basics of eBPF, the IETF should start to discuss the utilization of eBPF inside various Internet protocols.

Welcome to the NCS blog

There are now six research groups within the ICTEAM institute at UCLouvain that are active in Networking, Cybersecurity and Systems in general. You can find information about the recently published articles of these six research groups on https://ncs.uclouvain.be

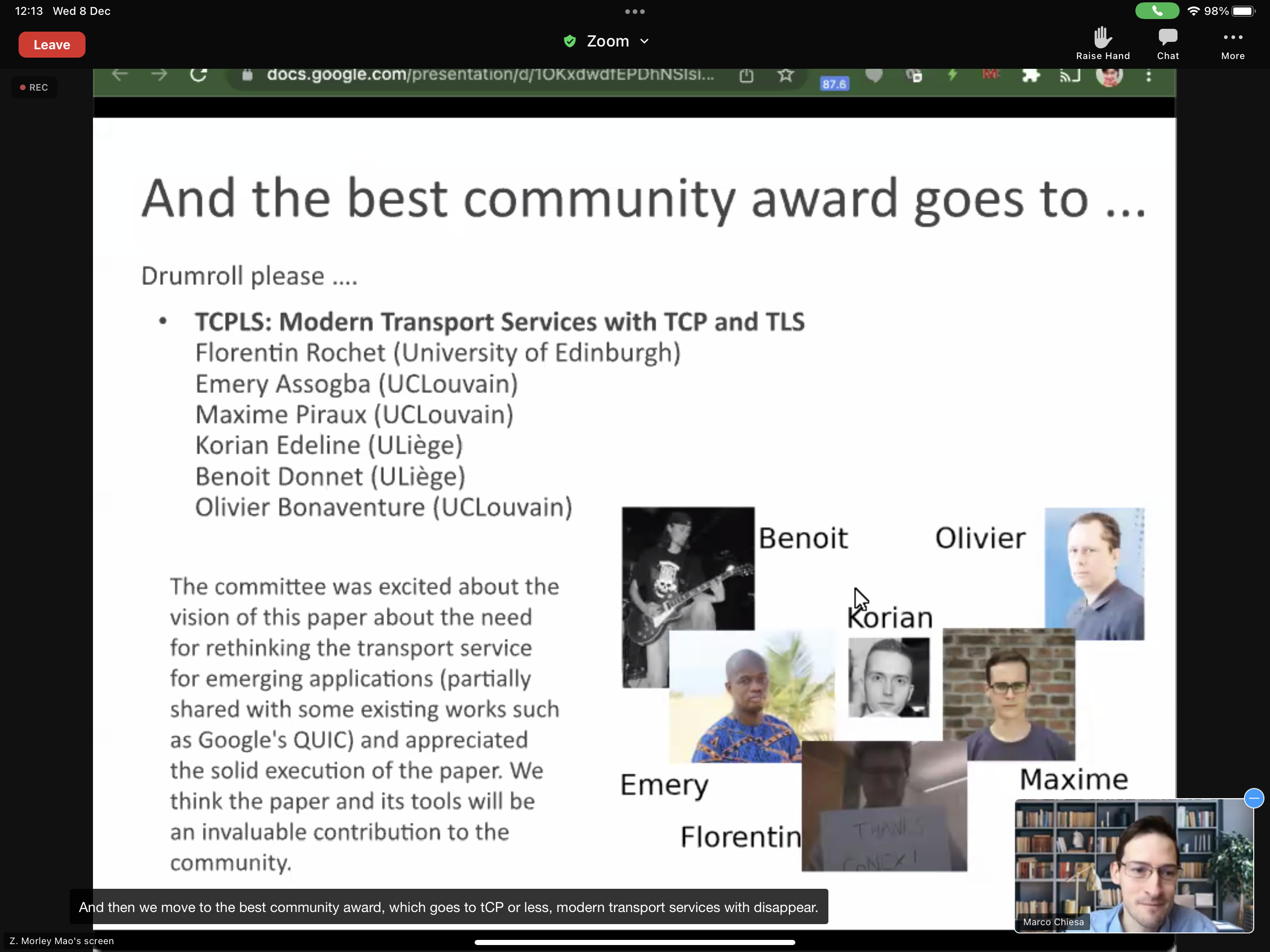

Conext’21 community award for TCPLS

Our latest article TCPLS: Modern Transport Services with TCP and TLS written by Florentin Rochet in cooperation with Emery Assogba (UAC, Benin), Maxime Piraux, Korian Edeline (ULiege), Benoit Donnet (ULiege) and Olivier Bonaventure received the community award at Conext’21.

The committee was excited about the vision of this paper about the need for rethinking the transport service for emerging applications and appreciated the solid execution of the paper. The TCPLS source code <https://github.com/frochet/tcpls_conext21> is freely available to encourage other researchers to expand the work.

2021 ANRP award for xBGP

Every year, the Internet Research Task Force awards the Applied Networking Research Prize (ANRP) to recognise the best recent results in applied networking and interesting new research ideas of potential relevance to the Internet standards community. In late 2020, 78 papers published in networking conferences and journals were nominated. Our xBGP paper, xBGP: When You Can’t Wait for the IETF and Vendors, written by Thomas Wirtgen (UCLouvain), Quentin De Coninck (UCLouvain), Randy Bush (Internet Initiative Japan & Arrcus, Inc), Laurent Vanbever (ETH Zürich) and Olivier Bonaventure (UCLouvain), was selected with five other articles. It will be presented by Thomas Wirtgen at a forthcoming IETF meeting.

This is the fourth time that one of our papers has been selected by the IRTF for an ANRP.

A podcast on Multipath TCP, the coolest protocol you’re already using but didn’t know

A few weeks ago, I had the opportunity to chat with Derick Winkworth and Brandon Heller for the Seeking Truth in Networking podcast. During this conversation, we first discussed the evolution of Multipath TCP, which they consider one of the coolest networking protocols. We discussed some of the lessons learned while designing and deploying Multipath TCP and also the current use cases that it enables. Apple uses Multipath TCP to improve user experience with seamless handovers for applications like Siri, Apple Maps or Apple Music. In parallel, Tessares pioneered the deployment of hybrid access networks that use Multipath TCP to combine xDSL and cellular to provide faster Internet services in rural areas in several countries. The 0-RTT Convert Protocol designed for this use case has been adopted by 3GPP for the Access Traffic Steering, Switching & Splitting (ATSSS) functions that enable 5G devices to efficiently combine 5G with Wi-Fi. We also discussed our recent research results on pluginizing Internet protocols and the open-source Computer Networking: Principles, Protocols and Practice ebook.

You can listen to this podcast from https://seekingtruthinnetworking.com/podcast/episode-7-olivier-bonaventure/

It is also available on Apple Podcasts and Spotify.

Two Hotnets papers

Hotnets is a yearly SIGCOMM workshop that focuses on new and innovative research ideas that could start new research directions.

During Hotnets’20, we presented two papers that propose new ideas for two of the key Internet protocols: BGP and TCP.

The first paper is entitled xBGP: When You Can’t Wait for the IETF and Vendors. It was written by Thomas Wirtgen, Quentin De Coninck, Randy Bush, Laurent Vanbever and Olivier Bonaventure. Since the early days of BGP, network operators have proposed changes and improvements to the protocol and its implementation. This has resulted in many discussions within the IETF and among network vendors to identify the extensions that need to be implemented and standardized. In this paper, we propose a very different approach. Since BGP implementations will evolve, we consider each BGP implementation as a kind of micro-kernel that exposes a vendor-neutral API which can be used by network operators to extend the protocol and develop protocol extensions. We implement this new idea on two different BGP implementations. To enable network operators to develop a single extension that runs on any implementation, we embed an eBPF virtual machine inside each BGP implementation. This virtual machine executes the protocol extensions that we call plugins. We use plugins to implement four very different BGP extensions.
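The architecture described above can be sketched as follows. This is only an illustration under stated assumptions: plain Python callables stand in for the eBPF bytecode that the virtual machine would execute, and every name here (`BgpHost`, `Route`, the hook names `import_filter` and `export_filter`, and `reject_long_paths`) is hypothetical rather than taken from the xBGP code.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Route:
    """Minimal, implementation-neutral view of a BGP route (hypothetical)."""
    prefix: str
    as_path: List[int]
    attributes: Dict[str, object] = field(default_factory=dict)


# A plugin returns True (accept), False (reject) or None (no opinion).
# In this sketch a Python callable stands in for eBPF bytecode.
Plugin = Callable[[Route], Optional[bool]]


class BgpHost:
    """Hypothetical BGP implementation exposing named hook points to plugins."""

    HOOK_POINTS = ("import_filter", "export_filter")

    def __init__(self) -> None:
        self._plugins: Dict[str, List[Plugin]] = {p: [] for p in self.HOOK_POINTS}

    def attach(self, point: str, plugin: Plugin) -> None:
        """Register a plugin at one of the hook points exposed by the host."""
        if point not in self._plugins:
            raise KeyError(f"unknown hook point: {point}")
        self._plugins[point].append(plugin)

    def run(self, point: str, route: Route, default: bool = True) -> bool:
        """Run plugins in order; the first one with an opinion decides."""
        for plugin in self._plugins[point]:
            verdict = plugin(route)
            if verdict is not None:
                return verdict
        return default  # fall back to the host's native behaviour


def reject_long_paths(route: Route) -> Optional[bool]:
    """Example operator-written extension: drop routes with more than 3 AS hops."""
    return False if len(route.as_path) > 3 else None


if __name__ == "__main__":
    host = BgpHost()
    host.attach("import_filter", reject_long_paths)
    print(host.run("import_filter", Route("2001:db8::/32", [65001, 65002])))
    print(host.run("import_filter", Route("2001:db8::/32", [65001, 65002, 65003, 65004])))
```

Because the plugin only sees the neutral `Route` view and the hook names, the same extension could in principle be attached to any host that exposes the same interface, which is the portability argument made in the paper.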

The second paper is entitled TCPLS: Closely Integrating TCP and TLS. It was written by Florentin Rochet, Emery Assogba and Olivier Bonaventure. In recent years, the IETF has devoted a lot of effort to standardizing QUIC: a new transport protocol that combines the main functions of the TLS and transport layers (reliability, congestion control, …) but runs above UDP so that it can be implemented inside userspace libraries. Despite the growing interest in QUIC, TCP remains the most popular Internet transport protocol. In this paper, we show that by combining TCP and TLS together, it is possible to design and implement a transport protocol that has much better properties than TCP alone, but still benefits from TCP’s advantages compared to QUIC. We implement a TCPLS prototype and use it to demonstrate the benefits that TCPLS could bring to current and future Internet applications.

These two articles are part of a broader effort that aims to develop a new methodology to design and implement Internet protocols. See https://pluginized-protocols.org/ for additional information and pointers to our current prototypes.

Bringing Multipath capabilities in QUIC

In recent years, the use cases for Multipath TCP have continued to grow. Multipath TCP is used on all iPhones to provide seamless handovers and improve performance for Siri, Apple Music and other applications. This successful deployment has encouraged 3GPP to adopt it for the ATSSS service that will enable future 5G smartphones to seamlessly use Wi-Fi and cellular networks. Multipath TCP is also used by network operators to deploy hybrid access networks that combine cellular and xDSL networks.

In parallel with this deployment, the IETF has finalised the specification of the QUIC protocol. In a nutshell, QUIC combines the functions that are typically found in the transport and TLS layer in a single protocol that runs above UDP so that it can be implemented as a userspace library. When the QUIC working group was chartered, we argued for the inclusion of multipath capabilities in this new protocol. This item was added to the charter and we proposed a first design for Multipath QUIC.

The QUIC working group spent more time than expected on designing the protocol and we adapted the design of Multipath QUIC to the different changes to the protocol. The latest version of Multipath QUIC is cleaner and better adapted to QUIC. The multipath requirements remain, and QUIC version 1 only provides a poor man’s solution with its connection migration capabilities, which have not yet been evaluated in the field.

On October 22nd, 2020, the chairs of the QUIC working group organised an interim meeting to better understand the multipath requirements and how they could be included in QUIC. Key presentations during this interim meeting include:

- Christoph Paasch on Multipath at Apple

- Olivier Bonaventure on Hybrid access networks and requirements on QUIC

- Yanmei Liu and Yunfei Ma on MQUIC use cases

The recordings are also available on https://youtu.be/p-ArboToDmk

SIGCOMM’20 tutorial on Multipath Transport Protocols

During ACM SIGCOMM’20, Quentin De Coninck and I gave a three-hour tutorial on Multipath Transport Protocols. During this tutorial, we summarised the most important features of two multipath transport protocols that we co-designed: Multipath TCP and Multipath QUIC. Here are the materials that we prepared for this tutorial:

- Slides for Multipath TCP part (pdf or pptx)

- Slides for Multipath QUIC

- Hands-on materials (vagrant box)

- Tutorial video recording

The vagrant box contains both a Multipath TCP kernel and a recent version of pquic including the multipath plugin. You can use both to experiment with Multipath TCP and Multipath QUIC.

Multipath TCP proxies

Network operators want to leverage the unique capabilities of Multipath TCP for two main types of use cases:

- aggregate the bandwidth of heterogeneous paths such as xDSL and cellular to support Hybrid Access Networks

- seamless handovers and cellular/Wi-Fi offload for smartphones and mobile devices

The Broadband Forum and 3GPP have standardised solution architectures that rely on Multipath TCP proxies to support those services, since servers rarely support Multipath TCP. We had identified this problem several years ago and proposed efficient Multipath TCP proxies in Multipath in the Middle(Box) in 2013.

To enable network operators to deploy such proxies, it was important to document them in IETF RFCs. Despite the simplicity of the idea, it took several years of effort to convince the IETF to document this approach in the 0-RTT TCP Convert Protocol that is now published as RFC 8803.

Open-source networking ebook

For more than a decade and with the help of several Ph.D. and Master students, we have developed a series of open educational resources to help train the next generation of networking students. A recent summary of our effort appears in the paper Open Educational Resources for Computer Networking published in the July 2020 issue of SIGCOMM’s CCR. The CCR Editor summarises our contribution as follows: "What distinguishes this textbook from traditional ones is that it not only is it free and available for anyone in the world to use, but also, it is also interactive. Therefore, this goes way beyond what a textbook usually offers: it is an interactive learning platform for computer networking."