Yesterday we presented our recent work at PAM 2021, in which we study the classification performance of NVIDIA Mellanox NICs and uncover some performance bottlenecks. Be careful when relying on hardware offloads: they can be very nice, but they come with drawbacks when you push too many rules or too many tables.

Research will lower data centres’ energy use

GitLab with Docker : Fixing “Error: PG::ConnectionBad” or “DETAIL: The data directory was initialized by PostgreSQL version 9.6, which is not compatible with this version 11.7.”

This is an issue I had for which I could not find any fix online. The closest help I could find was this blog post: https://gotanbl.com/foss/how-update-gitlab-in-docker/, but there are multiple mistakes in the article and the author is not reachable to fix them, so I’ll put the main points here…

The root of the issue (in my case) is that updating GitLab (with Docker at least) is quite cumbersome. If you update almost every week, you can always upgrade to “latest”. If you only do it from time to time, you have to go through intermediate versions: from your current version to the latest minor of that major, then to the first release of the next major, then to its latest minor, and so on. There is no way to do that automatically, so sometimes running the “usual command” won’t work.

The problem that leads to this error is that the PostgreSQL database version is only upgraded by some GitLab versions. If you skip the right one, the database is never upgraded and all subsequent updates break…

Worse, you’ll end up being told to run some commands to do this and that… However, the Docker container dies because it fails to start, so you won’t be able to enter those commands.

The solution

First, note your current version:

sudo docker exec -it gitlab bash

cat /opt/gitlab/version-manifest.txt | grep gitlab-ce | awk '{print $2}'

Then back up, stop and remove the container. It’s safe, as the real files, db, etc. are kept in $GITLAB_HOME:

sudo docker exec -t gitlab gitlab-backup create

sudo docker stop gitlab

sudo docker rm gitlab

Basically, you’ll have to follow a specific upgrade path, which can be found at: https://docs.gitlab.com/ce/update/#upgrade-paths

At the time of writing, this is the path:

8.11.x -> 8.12.0 -> 8.17.7 -> 9.5.10 -> 10.8.7 -> 11.11.8 -> 12.0.12 -> 12.1.17 -> 12.10.14 -> 13.0.14 -> 13.1.11 -> 13.x (latest)

So if your version is 11.10, you’ll have to upgrade to 11.11.8 first, and then continue up to the latest.
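To see which stops remain from a given version, the path above can be walked with a small helper. This is only a sketch: the function name `remaining_stops` is made up for illustration, the list is copied from this post and will be outdated by the time you read it, and it assumes a `sort` that supports `-V` (GNU coreutils).

```shell
#!/bin/bash
# Upgrade path copied from this post; refresh it from the GitLab docs before use.
UPGRADE_PATH="8.12.0 8.17.7 9.5.10 10.8.7 11.11.8 12.0.12 12.1.17 12.10.14 13.0.14 13.1.11"

# Hypothetical helper: print every stop that is strictly newer than version $1.
remaining_stops() {
    local current="$1" stop oldest
    for stop in $UPGRADE_PATH; do
        # sort -V orders version strings; the first line is the older of the two.
        oldest="$(printf '%s\n%s\n' "$current" "$stop" | sort -V | head -n 1)"
        if [ "$stop" != "$current" ] && [ "$oldest" = "$current" ]; then
            echo "$stop"
        fi
    done
}
```

For instance, `remaining_stops 11.10.0` lists 11.11.8 and everything after it. Append “-ce.0” to each stop to get the Docker tag, and finish with the latest release once the list is exhausted.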

To update to a version, do the following.

Verify that the command to run the container matches what you used to install GitLab in the first place. You should reuse exactly the same command; only the final $VERSION should change:

export GITLAB_HOME=/srv/gitlab

sudo docker run --detach --hostname gitlab.tombarbette.be --env GITLAB_OMNIBUS_CONFIG="external_url 'https://gitlab.tombarbette.be/'; gitlab_rails['gitlab_shell_ssh_port'] = 2022; " --publish 2443:443 --publish 2080:80 --publish 2022:22 --name gitlab --restart always --volume $GITLAB_HOME/config:/etc/gitlab --volume $GITLAB_HOME/logs:/var/log/gitlab --volume $GITLAB_HOME/data:/var/opt/gitlab gitlab/gitlab-ce:$VERSION

The $VERSION should be the version with “-ce.0” appended, for instance 11.11.8-ce.0. This is the Docker image tag, which can be found at https://hub.docker.com/r/gitlab/gitlab-ce/tags?page=1&ordering=last_updated

Normally, when you launch that command, the PostgreSQL upgrade should be done automatically. If it complains anyway, you can force the upgrade to version XXX with:

gitlab-ctl pg-upgrade -v XXX

where XXX is the database version.

After launching a specific version, you have to wait for GitLab to start completely, to be sure all migrations have finished.
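Rather than watching by hand, the wait can be scripted. The sketch below polls the container health reported by Docker. It assumes the gitlab/gitlab-ce image you run defines a health check; the names `container_status` and `wait_for_gitlab` and the default of 60 tries every 30 seconds are arbitrary choices for illustration. Note that “healthy” only means the services answer; background migrations may still be running, so a glance at the logs remains a good idea.

```shell
#!/bin/bash
# Health status as reported by Docker (empty if the image has no health check).
container_status() {
    sudo docker inspect --format '{{.State.Health.Status}}' gitlab 2>/dev/null
}

# Hypothetical helper: wait_for_gitlab [tries] [delay_seconds]
wait_for_gitlab() {
    local tries="${1:-60}" delay="${2:-30}" i
    for ((i = 0; i < tries; i++)); do
        if [ "$(container_status)" = "healthy" ]; then
            return 0
        fi
        sleep "$delay"
    done
    echo "gitlab is still not healthy, check: sudo docker logs -f gitlab" >&2
    return 1
}
```

Call `wait_for_gitlab` after each `docker run` and only move on to the next version when it returns successfully.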

If you run into trouble, you might want to check the logs with:

sudo docker logs -f gitlab

Typically, the logs in $GITLAB_HOME only start to be meaningful once this problem is fixed and GitLab has completely started, so they were not helpful for me.

So at this point, go back to the version list above and advance one by one…

It may seem crazy, but to avoid this from now on you have no choice but to update every week… Or you’ll have to play with versions again…

Now, I run a cron script that backs up and updates GitLab every week. Anyway, GitLab is a horror memory-wise and slows down with time, so removing and re-adding the container every week is actually helpful…

#!/bin/bash

sudo docker exec -t gitlab gitlab-backup create

sudo docker stop gitlab

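sudo docker rm gitlab

# docker run reuses a local "latest" image if there is one, so pull the new one explicitly

sudo docker pull gitlab/gitlab-ce:latest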

export GITLAB_HOME=/srv/gitlab

sudo docker run --detach --hostname gitlab.tombarbette.be --env GITLAB_OMNIBUS_CONFIG="external_url 'https://gitlab.tombarbette.be/'; gitlab_rails['gitlab_shell_ssh_port'] = 2022; " --publish 2443:443 --publish 2080:80 --publish 2022:22 --name gitlab --restart always --volume $GITLAB_HOME/config:/etc/gitlab --volume $GITLAB_HOME/logs:/var/log/gitlab --volume $GITLAB_HOME/data:/var/opt/gitlab gitlab/gitlab-ce:latest
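A slightly more defensive variant of the weekly script is sketched below. It runs the same steps, but stops at the first failure so that the container is never removed without a fresh backup, and it pulls the image before touching the running container. The function name `weekly_update` is made up; the paths, ports and hostname are the ones used in this post.

```shell
#!/bin/bash
export GITLAB_HOME=/srv/gitlab

# Hypothetical wrapper: abort as soon as one step fails.
weekly_update() {
    # No backup, no update: leave the running container untouched.
    sudo docker exec -t gitlab gitlab-backup create || return 1
    # docker run does not re-pull a tag that already exists locally.
    sudo docker pull gitlab/gitlab-ce:latest || return 1
    sudo docker stop gitlab || return 1
    sudo docker rm gitlab || return 1
    sudo docker run --detach --hostname gitlab.tombarbette.be \
        --env GITLAB_OMNIBUS_CONFIG="external_url 'https://gitlab.tombarbette.be/'; gitlab_rails['gitlab_shell_ssh_port'] = 2022; " \
        --publish 2443:443 --publish 2080:80 --publish 2022:22 \
        --name gitlab --restart always \
        --volume "$GITLAB_HOME/config:/etc/gitlab" \
        --volume "$GITLAB_HOME/logs:/var/log/gitlab" \
        --volume "$GITLAB_HOME/data:/var/opt/gitlab" \
        gitlab/gitlab-ce:latest
}
```

Add a final `weekly_update` line to the script that cron runs. Its exit status tells you whether the update went through.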

Our upcoming paper at PAM : “NICBench”

We’re pleased to announce that our upcoming paper “What you need to know about (Smart) Network Interface Cards” by G. Katsikas, T. Barbette, M. Chiesa, D. Kostic and G. Maguire Jr. has been accepted at PAM’21!

We can’t publish the preprint yet, but we’ll do so as soon as possible 🙂

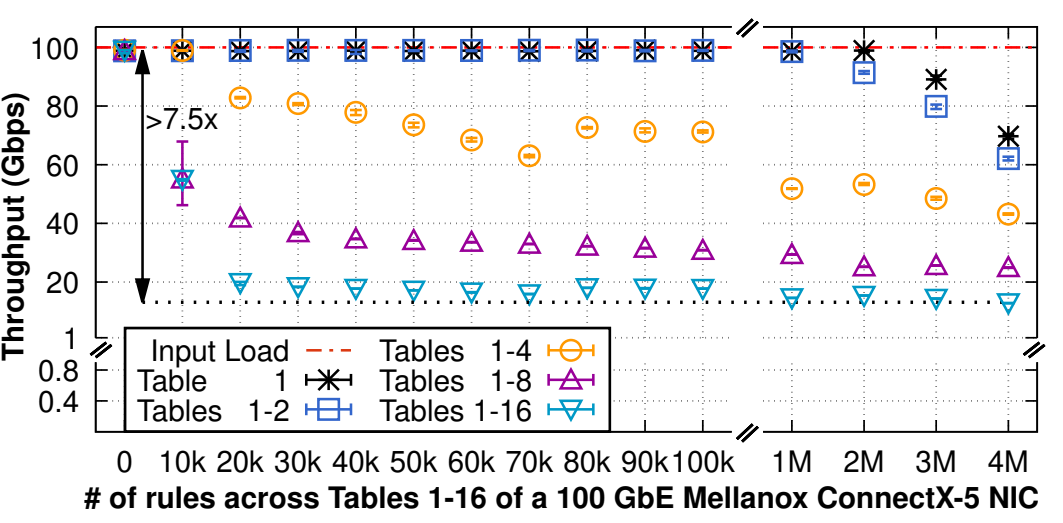

Network interface cards (NICs) are fundamental components of modern high-speed networked systems, supporting multi-100 Gbps speeds and increasing programmability. Offloading computation from a server’s CPU to a NIC frees a substantial amount of the server’s CPU resources, making NICs key to offer competitive cloud services. Therefore, understanding the performance benefits and limitations of offloading a networking application to a NIC is of paramount importance. In this paper, we measure the performance of four different NICs from one of the largest NIC vendors worldwide, supporting 100 Gbps and 200 Gbps. We show that while today’s NICs can easily support multi-hundred-gigabit throughputs, performing frequent update operations of a NIC’s packet classifier — as network address translators (NATs) and load balancers would do for each incoming connection — results in a dramatic throughput reduction of up to 70 Gbps or complete denial of service. Our conclusion is that all tested NICs cannot support high-speed networking applications that require keeping track of a large number of frequently arriving incoming connections. Furthermore, we show a variety of counter-intuitive performance artefacts including the performance impact of using multiple tables to classify flows of packets.

PAM will be held in late March, so stay tuned!

FOSDEM’21: FastClick and beyond…

In early February, my colleague Alireza Farshin and I presented a talk at FOSDEM, a huge open-source gathering. The video is now released!

In the talk we present FastClick with a short demo, review the existing alternative modular frameworks (mainly VPP and BESS), and then discuss the future of software dataplanes, which we believe our recent work PacketMill starts to address.

We mainly show how FastClick is still competitive and goes beyond the state of the art with PacketMill’s enhancements. We also re-ran an experiment at 100G showing that FastClick now improves on Click by more than 30x in a forwarding configuration. This is because we have maintained FastClick for nearly 6 years now, consider pull requests, and integrate recent research, while good old Click itself has sadly been stalled for a decade. I will write a blog post about the state of FastClick in the coming weeks.

I also bought the www.fastclick.dev domain to start a little showcase website. For now it redirects to GitHub. Feel free to help 🙂

A poster of our latest work, CrossRSS, a Stateless CPU-Aware Datacenter Load-Balancer

Today we will present a poster of our latest work at CoNEXT’20: CrossRSS! CrossRSS is a load balancer that spreads the load uniformly even inside the servers. It uses knowledge of the dispatching done inside the servers, RSS, to purposely select less-loaded cores without any server modification or inter-core communication on the server. Learn more by watching the short video!

The poster session will be held on the 4th of December, 2:30 CET on the Mozilla VR Hub

Our latest paper “Cheetah”, a load balancer that guarantees per-connection-consistency

Cheetah is a new load balancer that solves the challenge of remembering which connection was sent to which server without the traditional trade off between uniform load balancing and efficiency. Cheetah is up to 5 times faster than stateful load balancers and can support advanced balancing mechanisms that reduce the flow completion time by a factor of 2 to 3x without breaking connections, even while adding and removing servers.

More information at https://www.usenix.org/conference/nsdi20/presentation/barbette.

Dynamic DNS with OVH

It may not be obvious, but OVH lets you run your own Dynamic DNS if you rent a domain name, surely a better option than the weird paid service from dyndns.org. I will explain how to handle the updates on Linux using ddclient.

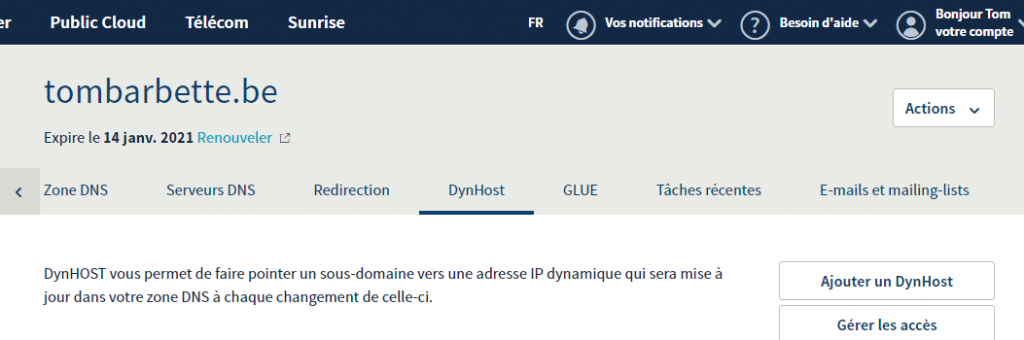

On the manager

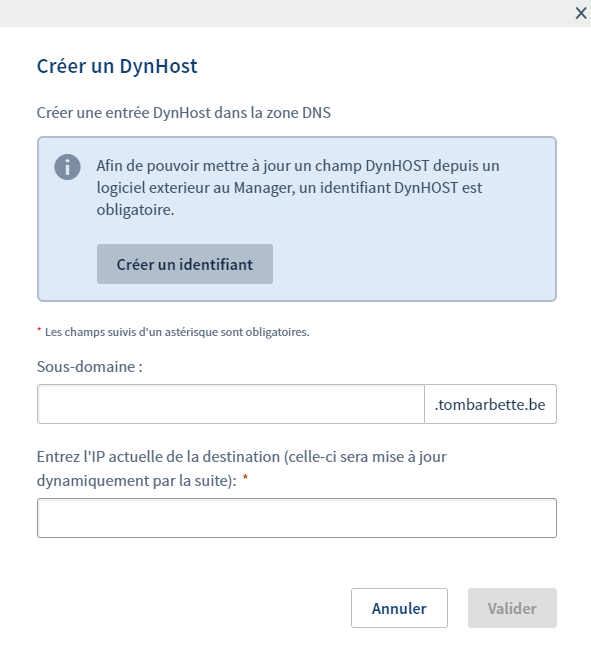

Connect to https://www.ovh.com/manager/web/#/configuration/domain/ , select your domain name, and create a new dynhost with the button on the right.

Enter a sub-domain name such as “mydyn” (.tombarbette.be), and add the actual IP for now, or just 8.8.8.8 for the time being.

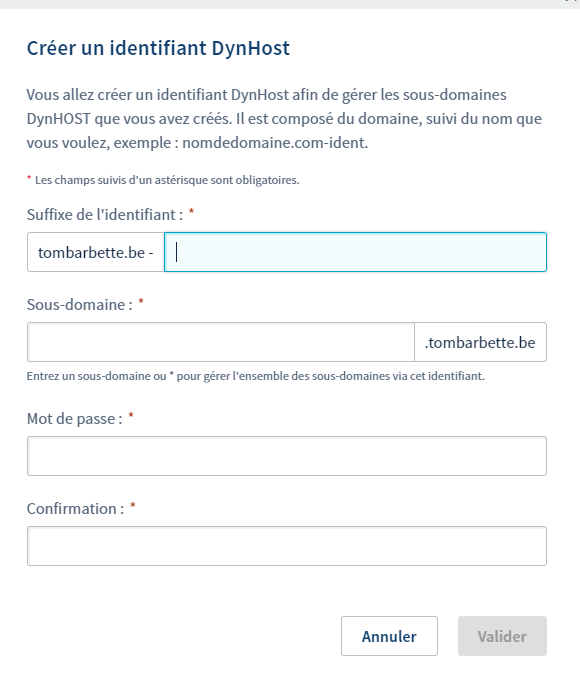

You are not finished yet: you have to create a login that will be allowed to update that DNS entry. Select the second button to manage accesses and create a new login.

On the server

sudo apt install ddclient

Then edit /etc/ddclient.conf

protocol=dyndns2

use=web, web=checkip.dyndns.com

server=www.ovh.com

login=tombarbette.be-mydns

password='password'

mydns.tombarbette.be

Just do “sudo ddclient” to update once then “sudo service ddclient restart” to get it updated automatically.
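To verify that the update went through, you can compare your public address with what the DNS returns. This is a rough sketch: the function names are made up, it assumes `curl` and `dig` (package dnsutils on Debian/Ubuntu) are installed, and it reuses the checkip.dyndns.com service from the configuration above. DNS caches may need a few minutes to catch up after a change.

```shell
#!/bin/bash
# Public address as seen from outside (the page contains "Current IP Address: a.b.c.d").
current_ip() {
    curl -s http://checkip.dyndns.com | grep -oE '[0-9]+(\.[0-9]+){3}'
}

# Address currently published for the given host name.
dns_ip() {
    dig +short "$1" | tail -n 1
}

# Hypothetical helper: check_dynhost mydns.tombarbette.be
check_dynhost() {
    local host="$1" public recorded
    public="$(current_ip)"
    recorded="$(dns_ip "$host")"
    if [ -n "$public" ] && [ "$public" = "$recorded" ]; then
        echo "up to date: $recorded"
    else
        echo "mismatch: public=$public dns=$recorded"
        return 1
    fi
}
```

Run `check_dynhost mydns.tombarbette.be` a little while after `sudo ddclient`.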

May this be helpful to someone. Personally, I just forget it all the time, so I wanted to leave a post-it somewhere.

Our new paper RSS++: load and state-aware receive side scaling

I’m delighted to announce the publication of our latest paper titled “RSS++: load and state-aware receive side scaling” at CoNEXT’19.

Abstract

While the current literature typically focuses on load-balancing among multiple servers, in this paper, we demonstrate the importance of load-balancing within a single machine (potentially with hundreds of CPU cores). In this context, we propose a new load-balancing technique (RSS++) that dynamically modifies the receive side scaling (RSS) indirection table to spread the load across the CPU cores in a more optimal way. RSS++ incurs up to 14x lower 95th percentile tail latency and orders of magnitude fewer packet drops compared to RSS under high CPU utilization. RSS++ allows higher CPU utilization and dynamic scaling of the number of allocated CPU cores to accommodate the input load while avoiding the typical 25% over-provisioning.

RSS++ has been implemented for both (i) DPDK and (ii) the Linux kernel. Additionally, we implement a new state migration technique which facilitates sharding and reduces contention between CPU cores accessing per-flow data. RSS++ keeps the flow-state by groups that can be migrated at once, leading to a 20% higher efficiency than a state of the art shared flow table.

Our latest paper “Metron: NFV Service Chains at the True Speed of the Underlying Hardware” has been accepted at NSDI 2018!

Abstract

In this paper we present Metron, a Network Functions Virtualization (NFV) platform that achieves high resource utilization by jointly exploiting the underlying network and commodity servers’ resources. This synergy allows Metron to: (i) offload part of the packet processing logic to the network, (ii) use smart tagging to setup and exploit the affinity of traffic classes, and (iii) use tag-based hardware dispatching to carry out the remaining packet processing at the speed of the servers’ fastest cache(s), with zero intercore communication. Metron also introduces a novel resource allocation scheme that minimizes the resource allocation overhead for large-scale NFV deployments. With commodity hardware assistance, Metron deeply inspects traffic at 40 Gbps and realizes stateful network functions at the speed of a 100 GbE network card on a single server. Metron has 2.75-6.5x better efficiency than OpenBox, a state of the art NFV system, while ensuring key requirements such as elasticity, fine-grained load balancing, and flexible traffic steering.